Draper’s Human Systems Technology capability enhances the success of our users’ most critical missions through user-centered design, quantitative user-state modeling and seamless integration into the systems they depend on most.

Draper accomplishes this by working side by side with users while designing their tools, and by modeling how they perform their missions using data extracted from these software and hardware tools and from monitoring of their neurophysiological states. We apply our skills in the design, development, and deployment of systems that support cognition (for users seated at desks, on the move with mobile devices, or maneuvering in the cockpit of vehicles) and systems that enable and enhance collaboration across both human-human and human-technology teams.

Manned-unmanned teaming (MUM-T) offers enormous potential to increase capacity and reduce risk in military, space, disaster response, and other high-stakes missions. To realize this potential, advances in autonomy as both tools and teammates must consider the human teammates—their needs and limitations, and the ways those change during mission operations. For the promises of human-machine teaming (HMT) to be realized, a shift of focus is needed from greater operational autonomy (operating with less human involvement) toward greater autonomous teaming capabilities (operating with higher productivity with a human teammate).

Draper designs and develops human-centered autonomy and adaptive systems architectures to support client missions. The key to successful implementation of such solutions includes seamless integration of these capabilities with human teammates, leveraging expertise in human-machine perceptual, computational, and physical capabilities and limitations. Draper takes a systems-level approach to optimize task sharing and team behaviors across human-machine teammates, ensuring combined capabilities across the team can meet complex task and mission goals within environmental constraints.

Our user-centered approach to automation, autonomous, robotic, and vehicle systems ensures solutions amplify, augment, empower, and enhance human performance. We understand what an operator needs to comprehend machine intent, informing the alignment of system capabilities with operator expectations. Our solutions incorporate human-centered and teamwork-centric artificial intelligence and advanced autonomy systems to meet the needs of the mission and concept of operations (CONOPS).

Building on our human-signals expertise, Draper implements artificial intelligence and machine learning to create computational team models, individual-based and personalized models, mixed initiative decision-making on human-machine teams, and social signal processing.

Our position, navigation, and timing (PNT) solutions enable tracking and navigation for humans and/or autonomy—including human-machine teams and human-in-the-loop systems—inside buildings and other fixed structures, as well as spacecraft and ships where the signal must move relative to its position over time.

For example, we developed a robust navigation algorithm for autonomous vehicles. Known as SamWISE, the algorithm integrates data from an inertial sensor and one or more navigation sensors (e.g., a camera or a LiDAR unit) to estimate the vehicle’s attitude, velocity, and relative position and orientation.
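
To illustrate the general idea of fusing an inertial sensor with an aiding navigation sensor, here is a minimal sketch of a one-dimensional Kalman filter. It is not the SamWISE algorithm, whose internals are not described here. The `State` class, the `predict`, `update`, and `run_demo` functions, the noise values, and the simulated accelerometer bias are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class State:
    pos: float = 0.0   # position relative to the start point (m)
    vel: float = 0.0   # velocity (m/s)
    p_pp: float = 1.0  # covariance of position
    p_pv: float = 0.0  # covariance between position and velocity
    p_vv: float = 1.0  # covariance of velocity


def predict(s, accel, dt, q=0.05):
    """Inertial step: propagate the state with a measured acceleration."""
    pos = s.pos + s.vel * dt + 0.5 * accel * dt * dt
    vel = s.vel + accel * dt
    # P <- F P F^T + Q with F = [[1, dt], [0, 1]] and a simplified diagonal Q
    p_pp = s.p_pp + 2 * dt * s.p_pv + dt * dt * s.p_vv + q * dt
    p_pv = s.p_pv + dt * s.p_vv
    p_vv = s.p_vv + q * dt
    return State(pos, vel, p_pp, p_pv, p_vv)


def update(s, measured_pos, r=0.01):
    """Aiding step: correct the state with a position fix (e.g., camera-derived)."""
    innovation = measured_pos - s.pos
    s_cov = s.p_pp + r            # innovation covariance
    k_pos = s.p_pp / s_cov        # Kalman gain for position
    k_vel = s.p_pv / s_cov        # Kalman gain for velocity
    return State(
        pos=s.pos + k_pos * innovation,
        vel=s.vel + k_vel * innovation,
        p_pp=(1 - k_pos) * s.p_pp,
        p_pv=(1 - k_pos) * s.p_pv,
        p_vv=s.p_vv - k_vel * s.p_pv,
    )


def run_demo(steps=100, dt=0.1, true_vel=1.0, accel_bias=0.1):
    """Compare fused and inertial-only position error for a biased accelerometer."""
    fused = State(pos=0.0, vel=true_vel)
    inertial_only = State(pos=0.0, vel=true_vel)
    for k in range(1, steps + 1):
        imu_accel = 0.0 + accel_bias  # true acceleration is zero; sensor is biased
        fused = predict(fused, imu_accel, dt)
        inertial_only = predict(inertial_only, imu_accel, dt)
        if k % 10 == 0:               # hypothetical position fix once per second
            fused = update(fused, true_vel * k * dt)
    true_pos = true_vel * steps * dt
    return abs(fused.pos - true_pos), abs(inertial_only.pos - true_pos)


if __name__ == "__main__":
    fused_err, inertial_err = run_demo()
    print(f"fused error: {fused_err:.3f} m, inertial-only error: {inertial_err:.3f} m")
```

In this toy scenario the inertial-only estimate drifts steadily because of the accelerometer bias, while the periodic position fixes keep the fused estimate close to the true position. A real system would estimate full 3D attitude, velocity, and position, and would typically model sensor biases explicitly.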

We also designed an AR-based technology, dubbed Monarch, that allows for vision-aided navigation without GPS, supporting people navigating areas where there are no maps or GPS signals to guide them. Built-in software algorithms rely on real-time visual and inertial data provided by robust sensors. Demonstrated as part of the Department of Defense Thunderstorm program, Monarch can integrate GPS or LiDAR data, but in the absence of such sources, it provides navigation relative to a starting location. As part of a heads-up display, Monarch’s AR technology supports human navigation and situation awareness in potentially dangerous or rapidly changing environments, such as urban combat or disaster response.

Further, we are supporting DARPA’s Competency-Aware Machine Learning (CAML) program, which aims to design AI that is aware of and able to communicate its competency and the likelihood that it can successfully complete a task, facilitating the development of trust between the AI and humans. On DARPA CAML, Draper is developing tools to allow autonomous systems based on machine learning to communicate their competencies to human users.

Draper UI/UX experts ensure that all our digital and physical solutions are human-centered, designed for simplicity, efficiency, error reduction, responsiveness, and adaptability. Where appropriate, we incorporate multimodal channels to provide the user with contextualized and easily interpreted information while filtering out noise and distractions.

We employ an agile design and development approach, rapidly iterating the design with users throughout the development process.

- User research and observation: Our design team captures a systems-level view of the user’s needs, preferences, tasks, workflows, and environments, including cognitive and physical operational constraints.

- Task flow models and requirements: We categorize and prioritize user goals to define operational use cases, information flows, and user actions. We review this initial design plan with users to confirm operational fit, functionality, accessibility of critical decision-making information, and desired future scope.

- Product design: As the design evolves, we iterate with users to ensure safe and effective operation, avoiding flaws that might prevent adoption and building user buy-in.

- Testing and validation: We employ manual and automated UI-based regression tests to identify and eliminate bugs and maintain feature functionality.

By combining user input with observational research, our UI/UX experts can design solutions that meet user needs, even those the users cannot articulate.

Draper harnesses the power of visual analytics, information visualization, and 3D experiential design in service of our clients' mission-critical technology programs. Our technologies provide egocentric situation awareness in the moment as well as reach-back capabilities for wider mission awareness. Our XR and visualization solutions are tailored to support mission-critical training and operational goals, helping users learn rapidly and safely, perform tasks faster, and reduce errors.

Extended Reality (XR) displays provide opportunities for 3D visualizations, both virtual and integrated with the real world, providing unprecedented access to visual experiences that immerse and engage users with innovative interaction capabilities. Draper designs and develops user-centered virtual reality (VR), augmented reality (AR), and mixed reality (MR) solutions for complex, dynamic task environments. We go beyond visual XR, creating effective and meaningful immersion that leverages speech, gestural and eye gaze input, 3D spatial audio, and haptics—when appropriate—for enhanced awareness.

Draper leverages its user-centered design and development expertise to ensure XR solutions are appropriate for the mission and are designed to minimize distraction and maximize performance outcomes. While advancing technical solutions can be seen as unnecessary bells and whistles, Draper ensures that when XR solutions are implemented, it is because they best support users, tasks, and missions within the operational environment and act as a force multiplier for performance outcomes.

Draper not only designs for XR but also implements information visualization solutions across any display technology, offering enormous benefits in technology design and development. Visual simulations can streamline the design process, and well-designed experiences and dashboards dramatically enhance utility, decisions, efficiency, and mission effectiveness. Draper transforms complex data into accessible, actionable insights and information that meet the needs of analysts, systems engineers, users/operators, and stakeholders. Our data visualization experts enable clients to see and understand trends, outliers, and intelligence to support data-driven decisions.

Draper has developed an augmented reality prototype to address a difficult warfighter challenge. The Enhanced Decision Support for Tactical CBRN Operations (EDS TACO) technology has provided a way to limit warfighters’ exposure to environmental threats while still providing adequate training in threat detection and navigation. Draper engineers also devised a way for an augmented reality (AR) system to work accurately without GPS over large areas of operation.

Draper offers expertise in measuring, monitoring, and modeling human signals—physiological and biological indicators of human states that affect behavior and performance.

We design solutions leveraging multimodal signal capture, providing multiple indicators across observable behaviors and ‘unobservable’ responses via cardiovascular, musculoskeletal, and neurological signals. Such signals are analyzed via appropriate time-synched data fusion methods and used to not only assess but also predict performance outcomes to drive adaptive system design.
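
As one illustration of what time-synchronized fusion can involve, the sketch below pairs samples from two signal streams recorded at different rates by matching nearest timestamps within a tolerance. It is a generic technique, not a description of Draper's methods. The `align_nearest` function, the stream names, the sample values, and the tolerance are hypothetical.

```python
from bisect import bisect_left


def align_nearest(ref, other, tol):
    """Pair each (time, value) in `ref` with the nearest-in-time sample of `other`.

    Both streams are lists of (timestamp_seconds, value) sorted by timestamp.
    If no sample of `other` lies within `tol` seconds, the paired value is None.
    """
    times = [t for t, _ in other]
    fused = []
    for t, value in ref:
        i = bisect_left(times, t)
        # Only the neighbors on either side of the insertion point can be nearest.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(times)]
        best = min(candidates, key=lambda j: abs(times[j] - t), default=None)
        if best is not None and abs(times[best] - t) <= tol:
            fused.append((t, value, other[best][1]))
        else:
            fused.append((t, value, None))
    return fused


if __name__ == "__main__":
    # Hypothetical data: heart rate at 1 Hz and an irregularly sampled movement signal.
    heart_rate = [(0.0, 62), (1.0, 64), (2.0, 67)]
    movement = [(0.1, 0.2), (0.9, 0.5), (2.6, 1.1)]
    for row in align_nearest(heart_rate, movement, tol=0.25):
        print(row)
```

Leaving unmatched samples as `None` rather than interpolating keeps gaps in a stream visible to downstream models, which is one reasonable design choice among several.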

For example, as part of the U.S. Army’s Monitoring and Assessing Soldier Tactical Readiness and Effectiveness (MASTR-E) study, we developed meaningful metrics for soldier performance. Our experts conducted design experiments and field tests, collecting hundreds of types of data to inform human performance and workload models.

As part of NASA’s Extreme Environment Mission Operations (NEEMO) program, we demonstrated wearable kinematic sensor (WKS) technology in a relevant operational environment. Designed to improve life and performance for astronauts on the International Space Station, WKS tracks crewmembers’ position and orientation during normal activities. The device also measures localized carbon dioxide, which provides important insights to inform the design of habitable volume layouts for future spacecraft and to understand how crews can be tasked for maximum efficiency.

Draper has also evaluated a variety of human biological and physiological signals, isolating key indicators of deception. Our team developed a machine learning (ML) engine, hardware, and software to field an experimental kiosk used to collect data from thousands of study participants. Evaluation studies determined that Draper’s design outperformed humans at detecting deception, making it a promising solution for homeland security and law enforcement.

Draper also worked on Immersive Situational Awareness Wear, called isaWear™, which is a group of wearables that present data to several senses to enable rapid understanding of information so that it can be acted upon quickly. This includes a thermal bracelet worn on a temperature-sensitive area of the wrist, developed by Draper in collaboration with Embr Labs.

Simulation-based models and visualizations provide evaluative capabilities across hardware and software components, integrated systems, and systems of systems. Our visual analytics and simulation visualizations are key enabling components for:

- Situational awareness and decision support

- Human-machine teaming

- User-centered digital engineering

- Human-space systems

Draper creates virtual simulations, systems, and environments to support our customers’ program needs—including analysis, hardware-in-the-loop, and human-in-the-loop evaluations.

Our proprietary simulation framework can be integrated with commercially available tools, enabling fully extensible designs. Initially developed for the U.S. Navy, the Draper Simulation Framework has supported hardware-in-the-loop (HWIL), software-in-the-loop (SWIL), and human-in-the-loop (HITL) simulations. The framework enables realistic concept visualizations at optimal fidelity for cost-effective decision support at every phase of the product life cycle, including:

- Trade space exploration

- Design parameters and requirements definition

- Functional modeling

- UI/UX and system design iterations

- Human performance assessments

- Subsystem validation and verification

- System requirements verification

- Mission validation

Dedicated, Model-Based Engineering Laboratory

Draper’s dedicated, model-based engineering lab enables physically accurate sensor simulation, engineering visualization, and human factors pilot displays—from two-dimensional user interfaces to 3D high-fidelity image generation. We offer the full range of display equipment, tools, and rendering engines:

- 4K quad display for warp and blend curved projection

- 4K video playback, recording, and switching

- GenesisIG

- VR Vantage

- EDGE

- Unity

- Unreal

- OpenSceneGraph

- GL Studio

- Qt

Training and Operational Displays

We create sophisticated terrain simulations for training or operational displays. Our simulation experience spans multiple space missions, including the Apollo and Artemis lunar landings, the ARES Mars scout airplane, and the Dream Chaser spaceplane. We also created simulations to test vision-based navigation technologies such as the

Our experts can adjust real or simulated camera data for terrain, biome, season, weather, time of day, and modality (infrared or visual), and we can add buildings, roads, and other details using geo-specific CIGI models or photo-realistic representations.

Virtual Environments and Digital Twins for Rapid and Cost-Effective Development

We can design virtual environments and digital twins that interface with hardware and software in the loop. A digital twin can evolve alongside the technology, beginning with generic models in the early design phases and upgrading as development proceeds. The result is a robust simulation that can support 2D or 3D mission visualization for planning, training, demonstration, and/or validation and verification.

For example, we are developing modeling and simulations to analyze sensors, systems, and mission scenarios to support the development of resilient position, navigation, and timing (PNT) technologies. By enabling the execution of the complete design and development process in a unified virtual environment, our Multi-Domain Quantitative Decision Aid software will help identify and prioritize the most effective technologies.

In military operations, space missions, and disaster response, success hinges on all actors—humans and autonomy—understanding what is often an extreme and rapidly shifting environment. Draper designs technologies for situation awareness and decision support that deliver that understanding.

Our human factors experts, engineers, designers, and developers create first-of-a-kind solutions that provide the accuracy and reliability required when lives and mission success are at stake and quick, effective decision-making is paramount.

We excel at defining complex, contextual requirements: determining what information the user needs, when, and under what constraints, which in turn drives how and in what form the information should be provided. We engineer for smart data integration, robust analytics, and modularity, ensuring that our solutions deliver for the client, the mission, and the user.

By bringing human-systems engineering and user-centered principles into the early concept and design phases, we can minimize the need for re-engineering while also allowing for updates as use cases and scenarios change.

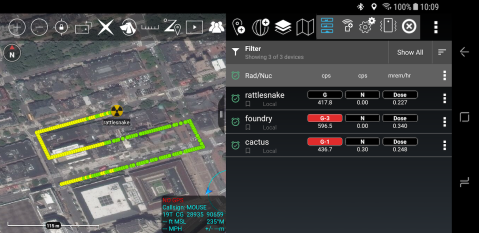

Since 2010, Draper has worked to advance situational awareness by providing enabling technologies for the Tactical Assault Kit (TAK), a map-based communications toolkit for mobile devices.

Draper has also developed an AR prototype to address a difficult warfighter challenge. The Enhanced Decision Support for Tactical CBRN Operations (EDS TACO) technology has provided a way to limit warfighters’ exposure to environmental threats while still providing adequate training in threat detection and navigation. It leverages the Android Tactical Assault Kit (ATAK) platform, CBRN Plug-In, and HoloLens technology.

Draper developed the Maritime Open Architecture Autonomy (MOAA) platform for the U.S. government as a government-controlled, configuration-managed, extensible open architecture for mission autonomy. It is designed to bring greater flexibility in mission design, operation, and resource deployment on any unmanned underwater vehicle. Draper engineers also devised a way for an augmented reality (AR) system to work accurately without GPS over large areas of operation, focusing specifically on sailors at sea.

The goal of developing technical solutions and/or providing training is to establish or increase knowledge and performance capabilities, with improved performance realized in an operational setting. But quantifying the impact of such solutions in operational terms is oftentimes seen as unachievable. Stakeholders are left to make acquisition decisions based on requirements met, not on how much of an impact a given solution will have on operations.

When clear measures of operational impact are integrated at the very start of an agile product development lifecycle, supporting and transfer documentation can build knowledge and skills based on clear objectives that directly leverage those measures. Progress against those objectives can then be assessed incrementally, and the documents become readily adaptable to formal user-centered requirements.

Three key steps make it possible to quantify the learning impact on the individual, team, and organization: (1) clearly define the identified performance gap in terms of operational impact; (2) develop impact-based learning and performance objectives to address the gap; and (3) establish clear metrics to measure achievement of objectives and anticipated performance outcomes. To be successful, evaluating operational impact requires a transparent upfront analysis.

Through an operational impact analysis, one can align business indicators with skills, knowledge, and capabilities gained and provide quantitative validation that the solution will have the desired impact. Stakeholders want to see an impact in terms of time, lives, or money saved. Incorporating user-in-the-loop evaluations and implementing key metrics of success during early product releases can provide operational impact indicators. These indicators not only show the potential value of the training solution but also guide development by identifying opportunities for increased training transfer, often on a more compressed timeline to sustainment.

Related Solutions

Tactical Assault Kit (TAK)

How is Draper enhancing situational awareness and decision support—while reducing cognitive load and task burdens?

Enhanced Decision Support for Tactical CBRN Operations (EDS TACO)

How can we empower trainees with near-practical experience without real world exposure to environmental threats?

Autonomy in Complex Environments

How can autonomy enhance soldiers’ situational awareness while reducing cognitive burden?

SAMWISE

How can autonomous vehicles navigate unknown environments without relying on GPS?